In artificial intelligence (AI) and machine learning, a flood of acronyms and jargon often confuses the layperson.

One such term that has captured global attention in recent years is GPT. You’ve probably heard of GPT-3, GPT-4, and now the much-anticipated GPT-5.

But what does GPT stand for, and why has it revolutionized the AI sector? Let’s explore.

GPT: A Brief Introduction

GPT stands for “Generative Pre-trained Transformer.” Broken down, each part of this title reveals an aspect of the technology’s design:

- Generative: The model generates content. Given a prompt, it produces a continuation of that text one token (a word or word fragment) at a time, trying to match the style, tone, and content of the input.

- Pre-trained: Before GPT can be used for any specific task, it goes through a pre-training phase in which it learns from vast amounts of text, much of it drawn from the internet. By “reading” this data, the model picks up grammar, facts about the world, some reasoning ability, and even a degree of style and nuance. The result? A machine learning model with general knowledge about a wide range of topics.

- Transformer: This refers to the architecture of the underlying model. Introduced in the 2017 paper “Attention Is All You Need” by Vaswani et al., the Transformer architecture has since become the backbone of many state-of-the-art natural language processing (NLP) models. It is designed to handle sequences of data, like text, and uses a mechanism called “attention” to weigh how important every other word in a sequence is to a given word.
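To make the “attention” idea a little more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is only an illustration, not how GPT is actually implemented: the word vectors are made-up 2-dimensional values, and the same vectors are reused as queries, keys, and values, whereas a real Transformer learns separate projections for each and works with far larger vectors.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this query against every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Blend the value vectors using the attention weights.
        blended = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(blended)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical toy vectors for a three-word sequence (assumed values).
tokens = ["the", "cat", "sat"]
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Self-attention: every word attends to every word, itself included.
outputs, weights = attention(vectors, vectors, vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each printed row shows how much one word “pays attention” to every word in the sequence. The weights in a row always sum to 1, and words whose vectors point in similar directions receive larger weights.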

The Evolution of GPT

Developed by OpenAI, the GPT series began with GPT-1 and has evolved through subsequent versions, with each iteration generally bringing enhancements in model size, training data, and capabilities.

The goal has consistently been to improve the model’s understanding of language and its ability to generate coherent, contextually relevant, and often human-like text.

Why GPT Matters

The GPT models, especially the later iterations, have demonstrated a level of language understanding and generation not previously seen in AI. This has resulted in a range of applications:

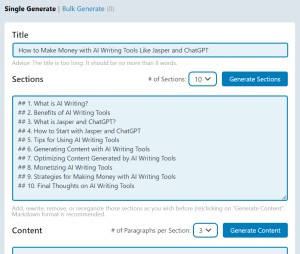

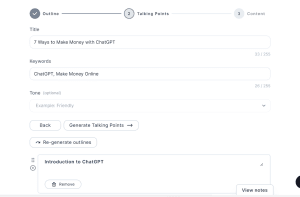

- Content Creation: From drafting articles to generating creative fiction, GPT can assist or even lead in content production.

- Conversational Agents: Improved chatbots and virtual assistants benefit from the language prowess of GPT, making them more adept at handling user queries.

- Education: GPT can answer students’ questions, provide tutoring in various subjects, and help researchers with literature reviews.

- Programming: With models like OpenAI’s Codex (based on GPT-3) and tools like GitHub Copilot, AI can understand and generate programming code from human-language prompts.

The implications of GPT and similar models are profound. They challenge our understanding of what machines can do and push us to consider the ethical dimensions of AI systems that can generate human-like text.

In Conclusion

GPT, or Generative Pre-trained Transformer, is more than a set of technical terms: it represents a leap in AI’s capacity to understand and generate human language.

As AI continues to evolve, the GPT series stands as a testament to the rapid advancements in the field and a hint of the exciting possibilities on the horizon.